NVMe vs spinning rust: sync benchmarks

Real numbers from syncing 48 GB of photos. The bottleneck is almost never where you think.

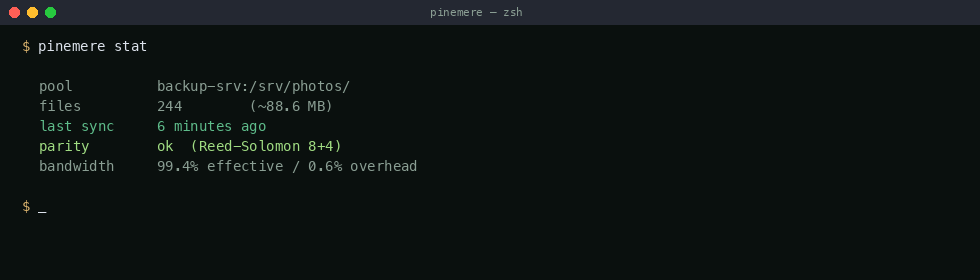

I keep getting asked "how fast is pinemere?" and my honest answer has been "depends." That's not helpful, so I set up a controlled benchmark to get real numbers.

Test setup

- Source machine: AMD Ryzen 7 7700X, 32 GB DDR5, Samsung 990 Pro 1TB NVMe (source pool)

- Target machine: Intel N100, 16 GB DDR5, connected via 2.5GbE direct link

- Target storage: three drives, tested separately:
  - WD SN770 500GB NVMe
  - Samsung 870 EVO 1TB SATA SSD
  - Seagate Barracuda 4TB 5400 RPM HDD

- Dataset: 48.2 GB, 6,247 files (RAW photos, edited JPEGs, some sidecar XMLs)

- Pinemere: v0.2.3, which predates streaming, so these are worst-case numbers (v0.2.4 adds streaming and should be about 15% faster on the initial sync)

- Baseline: rsync 3.2.7 with `-avz --progress`

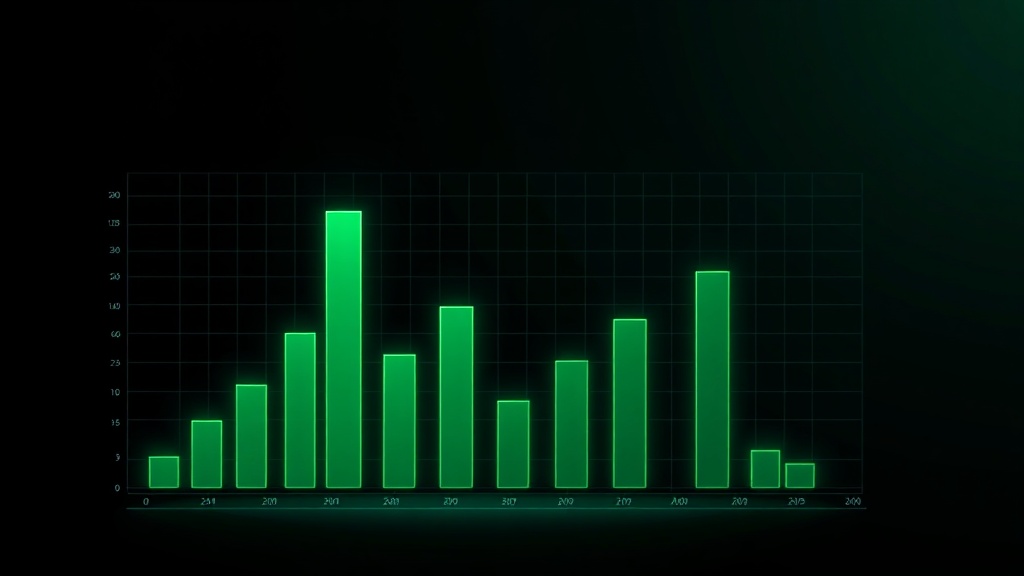

Initial sync (cold target, no existing data)

| Target | pinemere | rsync | Dedup ratio (pinemere) |
|---|---|---|---|
| NVMe | 6m48s (112 MB/s) | 5m52s (131 MB/s) | 1.44x |
| SATA SSD | 7m21s (103 MB/s) | 6m44s (114 MB/s) | 1.44x |
| HDD | 14m12s (54 MB/s) | 12m38s (61 MB/s) | 1.44x |

On initial sync, rsync is faster. This is expected: pinemere has overhead from Rabin chunking and Merkle tree construction that rsync doesn't pay. The 1.44x dedup ratio means pinemere transferred 33.4 GB instead of 48.2 GB (some duplicate RAW files in the dataset), but the chunking CPU cost ate most of that advantage.
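The dedup ratio is logical bytes divided by the bytes of unique chunks that actually have to be sent, which is why 48.2 GB / 1.44 ≈ 33.4 GB crossed the wire. Here is a minimal sketch of content-defined chunking plus that ratio, under stated assumptions: a Rabin-Karp-style polynomial rolling hash stands in for true Rabin fingerprinting, BLAKE2b (in Python's standard library) stands in for BLAKE3, and the window, mask and min/max chunk sizes are illustrative values, not pinemere's.

```python
import hashlib
import random

# Assumed parameters for illustration; these are NOT pinemere's actual settings.
WINDOW = 48               # bytes covered by the rolling hash
MASK = (1 << 13) - 1      # cut when the low 13 bits are zero: on average every 8 KiB past MIN_CHUNK
MIN_CHUNK = 2 * 1024      # never cut before this many bytes
MAX_CHUNK = 64 * 1024     # always cut at this many bytes
BASE = 257
MOD = (1 << 61) - 1       # Mersenne prime modulus for the polynomial hash
BASE_POW = pow(BASE, WINDOW - 1, MOD)  # weight of the byte leaving the window


def chunk_boundaries(data: bytes):
    """Yield (start, end) offsets of content-defined chunks.

    Uses a Rabin-Karp-style polynomial rolling hash over the last WINDOW bytes
    as a stand-in for true Rabin fingerprinting.
    """
    start = 0
    h = 0
    for i, byte in enumerate(data):
        if i - start >= WINDOW:
            # Window is full: remove the oldest byte before adding the new one.
            h = (h - data[i - WINDOW] * BASE_POW) % MOD
        h = (h * BASE + byte) % MOD
        length = i - start + 1
        if (length >= MIN_CHUNK and (h & MASK) == 0) or length >= MAX_CHUNK:
            yield start, i + 1
            start, h = i + 1, 0  # restart the window at the new chunk
    if start < len(data):
        yield start, len(data)


def dedup_ratio(files) -> float:
    """Logical bytes divided by the bytes of unique chunks (what must be sent)."""
    seen = set()
    logical = unique = 0
    for data in files:
        for s, e in chunk_boundaries(data):
            chunk = data[s:e]
            digest = hashlib.blake2b(chunk, digest_size=32).digest()  # BLAKE3 stand-in
            logical += len(chunk)
            if digest not in seen:
                seen.add(digest)
                unique += len(chunk)
    return logical / unique


if __name__ == "__main__":
    a = random.Random(1).randbytes(200_000)
    b = random.Random(2).randbytes(200_000)
    # One duplicated "RAW file": 3 files logically, 2 files' worth of unique chunks.
    print(dedup_ratio([a, b, a]))
```

Because cut points depend on the bytes inside the window rather than on fixed offsets, identical content yields identical chunks even when it sits in different files, which is what lets duplicate RAW files collapse to a single stored copy.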

Incremental sync (30 new photos, 1 renamed directory)

| Target | pinemere | rsync |
|---|---|---|
| NVMe | 0.8s | 34s |
| SATA SSD | 0.9s | 38s |
| HDD | 1.4s | 2m14s |

This is where content-addressed dedup shines. Rsync has to scan the entire target directory to compare timestamps and sizes: that's an lstat() call per file, 6,247 of them, and on HDD those seeks kill you. Pinemere compares manifest hashes in SQLite (one indexed query), identifies the blocks belonging to the 30 new photos, and streams only those. The renamed directory is a no-op because its content hashes didn't change.
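The manifest comparison can be sketched with Python's built-in `sqlite3` module. The schema (a `blocks` table keyed by hash on the target, and a manifest mapping each path to its block hashes) and the function names are hypothetical; the post only says pinemere runs one indexed query over manifest hashes. What the sketch illustrates is that paths never enter the comparison, so a renamed directory contributes zero missing blocks.

```python
import sqlite3


def open_target_index(stored_hashes):
    """Hypothetical target-side index: one row per block already on disk."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE blocks (hash TEXT PRIMARY KEY)")
    conn.executemany("INSERT INTO blocks (hash) VALUES (?)", ((h,) for h in stored_hashes))
    return conn


def missing_blocks(conn, manifest):
    """Return sorted block hashes referenced by the source manifest but absent on the target.

    manifest maps a file path to its list of block hashes. Paths are never
    compared, so renaming a file or directory changes nothing here.
    """
    conn.execute("CREATE TEMP TABLE IF NOT EXISTS wanted (hash TEXT PRIMARY KEY)")
    conn.execute("DELETE FROM wanted")
    conn.executemany(
        "INSERT OR IGNORE INTO wanted (hash) VALUES (?)",
        ((h,) for hashes in manifest.values() for h in hashes),
    )
    # Anti-join that uses the primary-key index on blocks.hash.
    rows = conn.execute(
        "SELECT w.hash FROM wanted AS w "
        "LEFT JOIN blocks AS b ON b.hash = w.hash "
        "WHERE b.hash IS NULL ORDER BY w.hash"
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    target = open_target_index(["b1", "b2", "b3"])
    # "photos/2025" was renamed to "photos/2025-italy"; one new photo was added.
    manifest = {
        "photos/2025-italy/a.raw": ["b1", "b2"],
        "photos/2025-italy/b.raw": ["b3"],
        "photos/2025-italy/new.raw": ["b4", "b5"],
    }
    print(missing_blocks(target, manifest))
```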

Where the bottleneck actually is

I expected the bottleneck to be hashing (BLAKE3 on 48 GB of data). It wasn't. BLAKE3 on the Ryzen 7700X with AVX-512 does ~6.2 GB/s single-threaded. The entire dataset hashes in under 8 seconds.
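One rough way to reproduce a single-threaded hashing figure like this: hash a large in-memory buffer and divide bytes by elapsed time. BLAKE3 is not in Python's standard library, so this sketch times `hashlib.blake2b` as a stand-in; its absolute numbers will differ from BLAKE3 and from pinemere's native code. The second helper is just the arithmetic behind "under 8 seconds" (48.2 GB / 6.2 GB/s ≈ 7.8 s).

```python
import hashlib
import time


def hash_throughput_gb_s(total_mb: int = 512, buf_mb: int = 4) -> float:
    """Time single-threaded hashing of total_mb of data, fed buf_mb at a time.

    Returns decimal GB/s. hashlib.blake2b stands in for BLAKE3, which is not
    in Python's standard library.
    """
    buf = bytes(buf_mb * 1_000_000)  # zero-filled; BLAKE2b speed does not depend on content
    hasher = hashlib.blake2b(digest_size=32)
    hashed = 0
    start = time.perf_counter()
    while hashed < total_mb * 1_000_000:
        hasher.update(buf)
        hashed += len(buf)
    hasher.digest()  # finalize, as a real sync would
    elapsed = time.perf_counter() - start
    return hashed / elapsed / 1e9


def projected_hash_seconds(dataset_gb: float, rate_gb_s: float) -> float:
    """Time to hash a dataset at a given rate, e.g. 48.2 GB at 6.2 GB/s."""
    return dataset_gb / rate_gb_s


if __name__ == "__main__":
    rate = hash_throughput_gb_s()
    print(f"blake2b: {rate:.2f} GB/s, 48.2 GB would take {projected_hash_seconds(48.2, rate):.1f} s")
```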

The actual bottlenecks, in order:

- Network: 2.5GbE caps at ~280 MB/s. On initial sync, the network is saturated before the disk or CPU.

- Target disk writes: On HDD, small random writes are brutal. The block store itself does sequential appends, but the manifest index updates trigger random seeks.

- Rabin chunking: ~800 MB/s on this CPU. Not the bottleneck for network sync, but it would matter for local-to-local NVMe copies.

For most people with a home NAS on gigabit Ethernet, the network will be the bottleneck: a gigabit link tops out well below the ~800 MB/s chunking rate, so pinemere's CPU overhead stays hidden behind the link's bandwidth limit. On 10GbE or faster links, the Rabin chunking starts to matter, and I'm exploring SIMD acceleration for that path.
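The bottleneck ranking follows from a simple pipeline model: when stages run concurrently, sustained throughput is set by the slowest stage. This sketch plugs in the chunking, hashing and 2.5GbE figures quoted in this post; the usable rates for gigabit (about 117 MB/s) and 10GbE (about 1,150 MB/s) are my approximate assumptions. It deliberately leaves out target-disk writes and per-file overhead, so it only shows which CPU or network stage caps throughput first.

```python
# Usable throughput per stage in MB/s. Chunking, hashing and 2.5GbE figures come
# from this post; the 1GbE and 10GbE link rates are approximate assumptions.
CPU_STAGES = {"blake3_hash": 6200.0, "rabin_chunking": 800.0}
LINKS = {"1GbE": 117.0, "2.5GbE": 280.0, "10GbE": 1150.0}


def bottleneck(stages: dict) -> tuple:
    """In a pipelined sync, sustained throughput is capped by the slowest stage."""
    name = min(stages, key=stages.get)
    return name, stages[name]


if __name__ == "__main__":
    for link, rate in LINKS.items():
        name, mb_s = bottleneck({**CPU_STAGES, "network": rate})
        print(f"{link}: limited by {name} at ~{mb_s:.0f} MB/s")
```

Under these assumptions the network sets the pace on gigabit and 2.5GbE, and chunking takes over once the link is faster than ~800 MB/s.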

Takeaway

Use rsync if you sync full directories once and never rename anything. Use pinemere if your workflow involves frequent incremental syncs, file renames, or partial content overlap across directories. The initial sync is about 9-16% slower, but incremental syncs are dramatically faster: in this test, roughly 42x on the two SSDs and 96x on the HDD.
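The slowdown and speedup figures come straight from the two tables; this small script recomputes them so they can be rechecked after a rerun. The time strings are copied from the tables, and `seconds` is a hypothetical helper for parsing them.

```python
# (pinemere, rsync) wall-clock times copied from the tables above.
INITIAL = {
    "NVMe": ("6m48s", "5m52s"),
    "SATA SSD": ("7m21s", "6m44s"),
    "HDD": ("14m12s", "12m38s"),
}
INCREMENTAL = {
    "NVMe": ("0.8s", "34s"),
    "SATA SSD": ("0.9s", "38s"),
    "HDD": ("1.4s", "2m14s"),
}


def seconds(t: str) -> float:
    """Parse '6m48s' or '0.8s' style durations into seconds."""
    minutes, _, rest = t.rpartition("m")
    return (int(minutes) * 60 if minutes else 0) + float(rest.removesuffix("s"))


def initial_slowdown_pct(target: str) -> float:
    """How much longer pinemere's cold sync took than rsync's, in percent."""
    pinemere, rsync = (seconds(t) for t in INITIAL[target])
    return (pinemere / rsync - 1) * 100


def incremental_speedup(target: str) -> float:
    """How many times faster pinemere's incremental sync was than rsync's."""
    pinemere, rsync = (seconds(t) for t in INCREMENTAL[target])
    return rsync / pinemere


if __name__ == "__main__":
    for target in INITIAL:
        print(f"{target}: initial {initial_slowdown_pct(target):+.0f}%, "
              f"incremental {incremental_speedup(target):.0f}x faster")
```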

Full benchmark data (raw JSON, flamegraphs) is in the repo under bench/2026-02-nvme-vs-hdd/.